Assuming Risk – Artificial Intelligence on the Battlefield

Artificial intelligence and machine learning (AI/ML) promise faster, more accurate analysis to enable holistic decision-making on the battlefield. With the exponential increase of data from numerous battlefield sensors, proponents expect AI/ML to reduce the “sensor to shooter time” significantly. This increased speed and accuracy promises to save the lives of soldiers and civilians alike. While soldiers make mistakes, whether through a lack of information, misinterpretation of information, or biased information, AI/ML may reduce human error and flawed conclusions through objective data collection and analysis. With examples of human failure littering the news during the past 20 years of war, the “coming AI revolution” appears to be inevitable as a technological approach to these problems and as a means to reduce the risk to populations, mission, and force (pp. 188-90).

The promise of AI/ML to improve speed and accuracy, thereby saving lives, is not without concern. Broadly speaking, AI works by applying computer processes to large amounts of data to generate an “output”: a usable finding, connection, or pattern. To produce these outputs, AI relies on a variety of tools, including computational power (computer processing speed), sensor data, algorithms, data analytics, robotics (autonomous systems), and machine learning, among others. To enable these processes, AI and its associated algorithms need more data of good quality to fine-tune weighting, classification, and outputs (p. 29). Long known to require large datasets, AI/ML have also been shown to reflect certain biases or be deceived through relatively simple, physical-world interference.

As the input data and outputs from prior layers move through various computational processes, the computer self-adjusts the weights and probabilities as it “rewards” itself for correct outputs. Computers make probabilistic assessments regarding the next set of outputs and reweigh data based on the differences between outcomes and expectations. Bias in the collection of data, in the set of instructions or calculations for data analysis, or in the probabilistic outcomes could affect the level of confidence that commanders have in the output. This post considers how these bias issues should be addressed under the law of war by distinguishing those created in the input layer from the concerns arising in the process layer and outputs as AI/ML are operationalized for battlefield use.

Input Layer Problems

In an effort to reduce sensor-to-shooter time, the U.S. Army has tested the FIRESTORM (FIRES Synchronization to Optimize Responses in Multi-Domain Operations) system during Army Futures Command’s Project Convergence. This system’s ability to collect feeds, combine them using algorithms, and distribute the combined feeds to other connected systems for firing solutions, all automatically, is revolutionary. In essence, FIRESTORM computers collect and process the data through automated surveillance as well as computer- and human-driven re-taskings. Similar programs, such as GIS Arta, are currently used by Ukrainian forces in their war with Russia. Through assistance from the United Kingdom, the Ukrainians have fielded a mobile program using commercially available technologies to identify, report, target, and engage Russian positions.

While systems such as FIRESTORM and GIS Arta certainly reduce sensor-to-shooter time (in some cases from 20 minutes to 20 seconds), it is important to understand the uncertainty and risk this reduced sensor-to-shooter time injects into a typical targeting process. These systems collect from manned and unmanned platforms and aggregate those data through a series of algorithms that seek patterns and recognize preprogrammed equipment. As the input data cascade through the algorithm layers, the computer makes probabilistic determinations at each step to move the data along to the next gate. In this process, the computer only processes the data it has access to; in other words, its “known knowns.” What raises the level of risk in this analysis are the “unknown unknowns”: data the computer does not have and does not know it needs to drive the most accurate analysis. This can be a significant risk because human-based analysis can better surmise the need for missing information, whereas algorithms, by their design, are limited to programmed processes within existing data.

As data move through the input process, the computer relies on the dependability and integrity of those data. The problem of source data validation is a key consideration when the system becomes automated with limited integrity checks from outside systems or people. Here, it is possible to compound flaws in probabilistic determinations, relying on potentially faulty data at multiple points as the same data cascade from input through analysis. If a piece of data is miscoded (e.g., “armored personnel carrier” v. “school bus”), this error will drive additional subsequent errors in the use of those data. Put bluntly, “garbage in, garbage out.” This problem is unique to AI analysis, as compared with analysis conducted by an all-source intelligence analyst: a single system handles a far greater quantity of data, and errors based on misidentification compound inside that system, whereas the traditional process involves multiple individuals, each capable of less throughput.
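
The compounding effect can be illustrated with simple arithmetic. The sketch below assumes, purely for illustration, that each processing gate is independently correct with a fixed probability. Real systems are unlikely to have fully independent stages, and the figures are hypothetical, not measurements of FIRESTORM, GIS Arta, or any fielded system.

```python
def chain_accuracy(stage_accuracies):
    """Probability that every stage in a pipeline is correct.

    Assumes (hypothetically) that stage errors are independent,
    so the per-stage probabilities simply multiply.
    """
    result = 1.0
    for p in stage_accuracies:
        if not 0.0 <= p <= 1.0:
            raise ValueError("each accuracy must be between 0 and 1")
        result *= p
    return result


# Hypothetical figures: five gates, each correct 95% of the time.
five_gates = chain_accuracy([0.95] * 5)
print(f"Chance all five gates are correct: {five_gates:.3f}")
```

Under these assumed figures, a chain of five gates that are each right 95 percent of the time is right end to end only about 77 percent of the time, which is why an early miscoding matters more than its individual error rate suggests.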

To mitigate the risk posed at the input layer, a method is needed to review the raw material relied upon—as opposed to the analytical product—through a process outside the target acquisition/engagement algorithm, essentially a quality assurance/quality control (QA/QC) mechanism.

For example, a separate algorithm or human auditor could check a select subset of the input processing determinations to ensure accuracy. While perfection is not required in the targeting process, to maintain the usefulness (and ultimately, lawfulness) of AI/ML systems, it is necessary to spot check sources to ensure a good faith use of input data in compliance with the law of war. It is essentially an application of feasible precautions to the use of computer algorithms for targeting and perhaps a corollary duty to operation of the “Rendulic Rule” which shields decisionmakers from liability based on unavailable information (U.S. Department of Defense, Law of War Manual §2.2.3.3; Appendix to John W. Vessey, Jr., Chairman, Joint Chiefs of Staff, Review of the 1977 First Additional Protocol to the Geneva Conventions of 1949, May 3, 1985).
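
A spot check of this kind might be sketched as follows. This is a minimal illustration only: the function name `spot_check`, the `audit_label` callback (standing in for a human auditor or a second algorithm), and the sample data are all hypothetical and do not describe any existing system.

```python
import random


def spot_check(determinations, audit_label, sample_size, seed=None):
    """Audit a random subset of input determinations.

    determinations: list of (item_id, system_label) pairs.
    audit_label: callable returning the independent reviewer's label
                 for an item_id (hypothetical stand-in for an auditor).
    Returns (observed_error_rate, disagreements).
    """
    if not determinations or sample_size < 1:
        raise ValueError("need at least one determination and one sample")
    rng = random.Random(seed)
    k = min(sample_size, len(determinations))
    disagreements = []
    for item_id, system_label in rng.sample(determinations, k):
        reviewer_label = audit_label(item_id)
        if reviewer_label != system_label:
            disagreements.append((item_id, system_label, reviewer_label))
    return len(disagreements) / k, disagreements


# Hypothetical data: the system miscoded item "a2".
reviewer = {"a1": "armored personnel carrier", "a2": "school bus", "a3": "school bus"}
system = [
    ("a1", "armored personnel carrier"),
    ("a2", "armored personnel carrier"),
    ("a3", "school bus"),
]
rate, flagged = spot_check(system, reviewer.get, sample_size=3, seed=0)
print(rate, flagged)
```

The audit runs outside the targeting algorithm itself and looks only at the raw determinations, which mirrors the separation between raw material and analytical product described above.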

Processing Concerns

In deep learning, computers adjust weights and uses of data based upon internal reinforced learning, in effect rewriting the processes during the analysis. To properly assess the risks of using the AI program, the commander and those operating or relying on the system must have at least a basic understanding of the processes involved.

The U.S. Department of Defense Law of War Manual makes clear that in conducting hostilities in accordance with the law of war, “[d]ecisions by military commanders or other persons responsible for planning, authorizing, or executing military action must be made in good faith and based on their assessment of the information available to them at the time” (§5.3.2). “Good faith,” while not defined in the Manual, requires, at a minimum, the commander consider the information reasonably available. For example, intentional ignorance of system risks or limitations seems to fall short of good faith, while briefings from knowledgeable parties could provide support of having acted in good faith. The question is, while acknowledging omniscience is not the standard, how much knowledge about the AI/ML system does a leader need to be reasonable and to satisfy the obligation to take feasible precautions.

To faithfully discharge their duties and obligations in the targeting process, commanders cannot turn a blind eye to patterns of misidentification or nonsensical outputs derived from the processing layer. Instead, to maintain adherence to the law of war in good faith when using AI/ML, commanders must update their understanding based on their technical, tactical, and intelligence knowledge of the digital processes underpinning their decisions. For example, within ML, the algorithm can self-adjust based on internal feedback to achieve more “correct” results. Similarly, software updates can change parameters or complex processes in unpredictable ways. As the processing layer changes through use, commanders must have a QA/QC process to identify systematic errors in the AI/ML. Because error rates can be calculated and AI/ML results audited, these capabilities must provide feedback to the system operator to inform good faith use. Akin to the “one bite rule” under common law, good faith application of the law of war requires updates to one’s understanding of the operating environment rather than a static view. This is not to say a commander is allowed one “free” war crime before culpability; rather, a commander cannot ignore evidence of AI/ML-related errors and stand on prior testing to avoid culpability.

Prior to first contact with an enemy, training scenarios repeatedly test AI/ML systems. However, as contact with an enemy drives necessary software changes to remedy previously unknown errors, account for new operational realities, or simply preprogram new friendly or enemy equipment, the changed software lacks the luxury of extensive pre-conflict testing. As operational data drive change to the processing layer, soldiers or contractors will make necessary changes to the software to address a changing environment or emerging problems. Are they inadvertently creating new issues as they solve existing ones?

A simple search reveals numerous instances of failed software updates across operating systems and manufacturers. While nuisances in the civilian world, these errors can lead to serious and unnecessary casualties in war. As these changes inject new processes to a complex battlefield, commanders must stay abreast of the impact these changes have on the decision-making process. Good faith in the use of AI/ML requires using the information available, by verifying some degree of system inputs and outputs, to assess the processing layer’s accuracy in the operating environment. Like all other updateable weapon and intelligence systems available today, knowledge of the update and the potential limitations or issues with such updates would support good faith determinations.

Using AI/ML Outputs

The outputs from AI/ML tools synthesize massive amounts of data into digestible, actionable pieces. However, these processes and the statistical probabilities associated with the outputs can predetermine outcomes through a veneer of objectivity or mathematical certitude. High percentages delivered with outputs give an air of officialdom to the result. Presented in this manner, probabilities reported as “high” can influence human judgment even in cases of discomfort with the computer-generated output. Supplanting a commander’s judgment with data-driven algorithms introduces additional risk to the specific mission and broader operational framework.

The U.S. Law of War Manual stipulates the responsibility of commanders by stating that “[t]he law of war presupposes that its violation is to be avoided through the control of the operations of war by commanders who are to some extent responsible for their subordinates” (§18.4.1). The same responsibility is prescribed under Article 87(2) of Additional Protocol I to the Geneva Conventions, which demands that commanders consciously exercise their judgment when incorporating AI/ML outputs in their decisions. While providing distinctive and useful information, reliance on computer algorithms does not suspend the commander’s inherent responsibilities to apply the laws of war in good faith.

To address the risk of blind deference to computer outputs, judge advocates should communicate the legal standards for targeting decisions to the commander and members of the targeting cell within the context of the technological capabilities being used. Just as a military judge instructs court-martial panelists (jurors) on the definition of reasonable doubt (U.S. Department of the Army, PAM 27-9, Military Judge’s Benchbook, para. 2-5), judge advocates should instruct those in the targeting process how the AI/ML output can and cannot be used, as well as the relevant requirements under the law of war. In fulfilling this task, it is incumbent upon the judge advocate to understand the system and its limitations to properly characterize and communicate the risk to the deciding commander.

Part of understanding the AI/ML system is knowing the inherent error rate and audit features of the software. As machines learn through data and generate probabilistic, but verifiable, outputs, the difference between the outputs and real world facts generates a measurable rate of error. Similar to a confidence interval or p-value in statistics, error rates can tell how much weight should be given to a single AI/ML output.
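
To make the statistical analogy concrete, the sketch below estimates an error rate from audited outputs and attaches a confidence interval to it. The Wilson score interval is used here as one common choice; it is an assumption of this example and not a method the Army or DoD is known to prescribe. The counts are hypothetical.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for an observed error rate.

    errors: audited outputs that did not match real-world facts.
    trials: audited outputs in total.
    z: normal quantile (1.96 corresponds to roughly 95% confidence).
    Returns (low, high) bounds on the underlying error rate.
    """
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical audits: the same 5% observed rate from very different sample sizes.
small = wilson_interval(1, 20)
large = wilson_interval(50, 1000)
print("20 audited outputs:   %.3f to %.3f" % small)
print("1000 audited outputs: %.3f to %.3f" % large)
```

The two audits report the same observed rate, but the interval from the smaller audit is much wider. An error rate quoted without the number of cases behind it therefore says little about how much weight a single output deserves.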

Coupled with measurable error rates, auditability instills confidence in the system by making it possible to understand how the particular output was formulated. While the intricacies of adjusting mathematical models are more than what a commander could reasonably be required to ingest on a battlefield, the presentation of AI/ML outputs with associated references to raw material would allow a relatively easy, on-the-spot validation of the AI/ML outputs. This check would also meet the commander’s requirement for good faith adherence to the law of war without slowing the engagement process, thereby managing risk to mission and force while accounting for the legal risks.
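
One way to picture such a presentation is an output record that carries pointers to its own raw material. The sketch below is illustrative only: the class names, fields, and sample values are hypothetical and are not drawn from any fielded system or DoD data standard.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class SourceRef:
    """Pointer to one piece of raw material behind an output (hypothetical schema)."""
    sensor_id: str
    collected_at: str  # ISO 8601 timestamp, kept as text for simplicity
    location: str      # where a reviewer can pull up the raw item


@dataclass
class AuditableOutput:
    """A system output bundled with the raw material it was derived from."""
    label: str
    confidence: float
    sources: List[SourceRef] = field(default_factory=list)

    def is_traceable(self) -> bool:
        # An output with no linked raw material cannot be checked on the spot.
        return len(self.sources) > 0

    def summary(self) -> str:
        lines = [f"{self.label} (reported confidence {self.confidence:.0%})"]
        if not self.sources:
            lines.append("  no raw material linked; treat as unverified")
        for s in self.sources:
            lines.append(f"  source {s.sensor_id} at {s.collected_at}: {s.location}")
        return "\n".join(lines)


# Hypothetical example values.
output = AuditableOutput(
    label="armored personnel carrier",
    confidence=0.92,
    sources=[SourceRef("uas-07", "2023-01-01T10:15:00Z", "archive/frame-0417")],
)
print(output.summary())
```

Keeping the reported confidence and the source list in a single record means the percentage never reaches the decision-maker without a route back to the underlying imagery or report.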

Conclusion

The Department of Defense (DoD) has set forth five principles for ethical AI, also known as Responsible AI (RAI). These ethical principles indicate that DoD AI should be: responsible; equitable; traceable; reliable; and governable. Underlying all of these principles is the idea of risk mitigation (U.S. Department of the Army, PAM 385-10, Army Safety Program, Glossary §II).

While these principles are insufficiently defined to create system design requirements, RAI is integral to the idea that risk is “directly related to the ignorance or uncertainty of the consequences of any proposed action.” The Army’s 2020 Artificial Intelligence Strategy provides five lines of effort to address the DoD ethical principles and risks associated with incorporating AI into battlefield technologies. These interrelated lines of effort are necessary to address the risks, including those described above, on future battlefields.

The risk analysis process in developing, fielding, and maintaining AI/ML-infused systems must consciously derive information from real world applications of the systems and push that information back to leadership to reach tolerable levels of risk. Though it will directly impact the DoD and Army, this process cannot be a solely military or government function. Most of the technology and expertise for these systems resides outside of the government. According to the Army’s AI Strategy:

The public is a key enabler to the Army’s ability to meet Army AI goals and timelines. Our citizens need to know that our Army is using AI capabilities in an ethical, safe, and legal manner. They need to have trust, just as our Soldiers must have trust, in the Army’s design, development, and application of AI capabilities in accordance with our Nation’s values and the rule of law.

In accepting the risk of placing additional AI/ML on the battlefield, leaders must avoid the conundrum of developing systems that are legal and ethical in theory but violate the nation’s laws, policies, and values in practice. This will require continued focus at all stages of development across the system as a whole, from inputs through processing to outputs.

***

Major Timothy (Tim) McCullough is an active duty Army judge advocate currently assigned as an attorney-advisor working with artificial intelligence, position/navigation/timing/space, intelligence, and aviation for Army Futures Command in Austin, Texas. The views expressed are those of Major McCullough in his personal capacity and do not necessarily represent those of the U.S. Army or any other U.S. government entity.

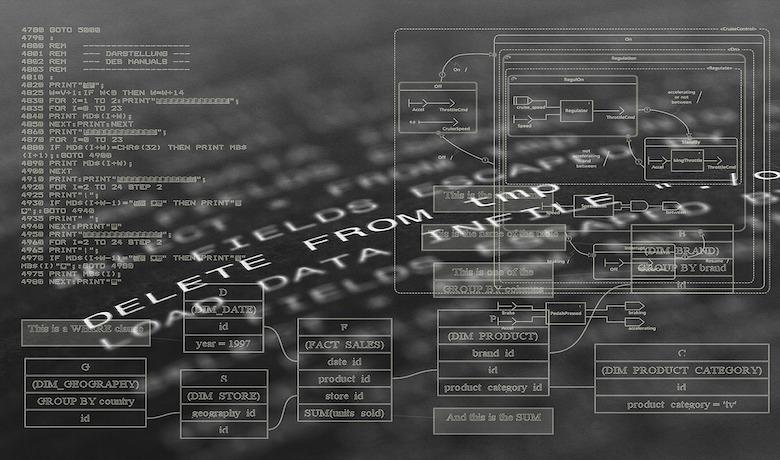

Photo credit: Pixabay